Tag: Judging with Your Gut

-

How NOT To Do Law, Philosophy, and Neuroscience

I’ve just returned from the Understanding Humans through Neuroscience conference at the American Enterprise Institute, where I heard papers by Roger Scruton, Walter Sinnott-Armstrong, and Stephen Morse. What struck me was how mired the three papers were in defending against a certain kind of agency-undermining determinism that few people take seriously anymore. All of…

-

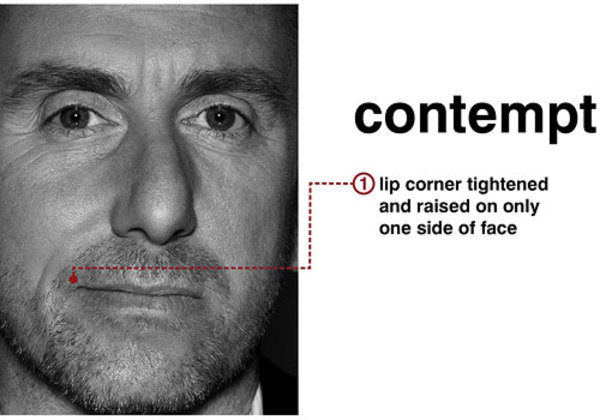

More on Contempt

A friend suggests that my recent arguments against the moral status of contempt ignored an important role it plays in policing our moral community. The concern is that if we cannot feel (and expect others to feel) contempt for someone like Bernie Madoff, then we will lose the morally instructive value of punishment. If we…

-

Advice

Ever since the markets became front-page news, I’ve been caught in some sort of economics blog vortex. At this point, most of my reading is no longer directed towards macroeconomic issues and institutional critique, but rather focuses on the economics department at George Mason. The problem is that it seems like these people really…

-

Should there be a place for disdain in our emotional lives?

In this post, I want to argue that disdain, contempt, and scorn have no moral place in our emotional lives. In short, my claim is that these emotions are immoral because they target persons and not actions, and they violate the principle of equality of persons. One can feel shame, anger, hatred, or envy in…

-

Appreciative Thinking

I’ve been having a debate on a friend’s Facebook page about the value of Martha Nussbaum’s work (I’m a fan), and serendipitously I found this post on “appreciative thinking” via Tyler Cowen. It’s a kind of inverted critical thinking, from Seth Roberts: When it comes to scientific papers, to teach appreciative thinking means to help…

-

Sex and Judgment

So in the last post, I showed how the initial versions of Christian judgment were remarkably modest and fallibilist with regard to other people. This makes a certain amount of sense, since Augustine was attached to a fairly rationalist theology, and always gave both doctrinal and basically ethical reasons for his judgments. (For instance, with…

-

After Phronesis

In the Confessions, Augustine argues that the capacity to judge is a capacity only available to those who have come to know God: “… we become new men in the image of our Creator. We gain spiritual gifts and can scrutinize everything—everything, that is, which is right for us to judge—without being subject, ourselves, to…